(Each says it only tested the technology and was never a client.) I discovered that the company had made the app available to investors, potential investors and business partners, including a billionaire who used it to identify his daughter’s date when the couple unexpectedly walked into a restaurant where he was dining.Ĭomputers once performed facial recognition rather imprecisely, by identifying people’s facial features and measuring the distances among them - a crude method that did not reliably result in matches. BuzzFeed published a leaked list of Clearview users, which included not just law enforcement but major private organizations including Bank of America and the N.B.A. Indeed, when the public found out about Clearview last year, in a New York Times article I wrote, an immense backlash ensued.įacebook, LinkedIn, Venmo and Google sent cease-and-desist letters to the company, accusing it of violating their terms of service and demanding, to no avail, that it stop using their photos. Helping to catch sex abusers was clearly a worthy cause, but the company’s method of doing so - hoovering up the personal photos of millions of Americans - was unprecedented and shocking. That was by design: The government often avoids tipping off would-be criminals to cutting-edge investigative techniques, and Clearview’s founders worried about the reaction to their product. “There was no way we would have found that guy.”įew outside law enforcement knew of Clearview’s existence back then. (Such crimes fall under the agency because, pre-internet, so much abuse material was being sent by mail internationally.) “It was an interesting first foray into our Clearview experience,” said Erin Burke, chief of H.S.I.’s Child Exploitation Investigations Unit. The case represented the technology’s first use on a child-exploitation case by Homeland Security Investigations, or H.S.I., which is the investigative arm of Immigrations and Customs Enforcement. 
(Viola’s lawyer did not respond to multiple requests for comment.)Īt the time, the use of Clearview in Viola’s case was not made public I learned about it recently, through court documents, interviews with law-enforcement officials and a promotional PowerPoint presentation that Clearview made. He later pleaded guilty to sexually assaulting a child and producing images of the abuse and was sentenced to 35 years in prison. Law-enforcement officers arrested Viola in June 2019. His profile was public browsing it, the investigator found photos of a room that matched one from the images, as well as pictures of the victim, a 7-year-old. Another investigator found Viola’s Facebook account. The agent contacted the supplements company and obtained the booth worker’s name: Andres Rafael Viola, who turned out to be an Argentine citizen living in Las Vegas. On Instagram, his face would appear about half as big as your fingernail. But upon closer inspection, you could see a white man in the background, at the edge of the photo’s frame, standing behind the counter of a booth for a workout-supplements company. The suspect was neither Asian nor a woman. The app turned up an odd hit: an Instagram photo of a heavily muscled Asian man and a female fitness model, posing on a red carpet at a bodybuilding expo in Las Vegas. To hear more audio stories from publishers like The New York Times, download Audm for iPhone or Android. This dwarfed the databases of other such products for law enforcement, which drew only on official photography like mug shots, driver’s licenses and passport pictures with Clearview, it was effortless to go from a face to a Facebook account.

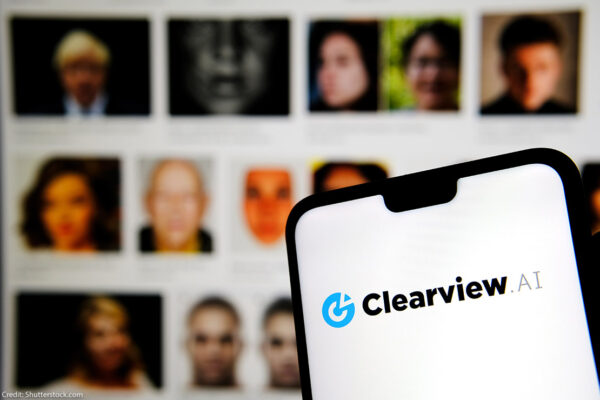

The team behind it had scraped the public web - social media, employment sites, YouTube, Venmo - to create a database with three billion images of people, along with links to the webpages from which the photos had come.

When an investigator in New York saw the request, she ran the face through an unusual new facial-recognition app she had just started using, called Clearview AI. The agent sent the man’s face to child-crime investigators around the country in the hope that someone might recognize him. The man appeared to be white, with brown hair and a goatee, but it was hard to really make him out the photo was grainy, the angle a bit oblique. One showed a man with his head reclined on a pillow, gazing directly at the camera. Found by Yahoo in a Syrian user’s account, the photos seemed to document the sexual abuse of a young girl. In May 2019, an agent at the Department of Homeland Security received a trove of unsettling images. When a secretive start-up scraped the internet to build a facial-recognition tool, it tested a legal and ethical limit - and blew the future of privacy in America wide open.
